After waiting almost 6 months since it launched, and a month after I placed my order, I am now the proud owner of a RaspberryPi 🙂 For those of you who are wondering what on earth I am talking about, it’s a computer the size of a phone (see the pic below comparing it with my old Nokia N95) that costs $35 and is powerful enough to play Quake3. It’s amazing how small this thing is and how many features they have managed to cram onto the board. It was delivered yesterday, and I was a bit upset at the customs duty I had to pay on the device (about 50% of the cost of the device + the cost of shipping), but it still turns out to be a lot cheaper than any other contender.

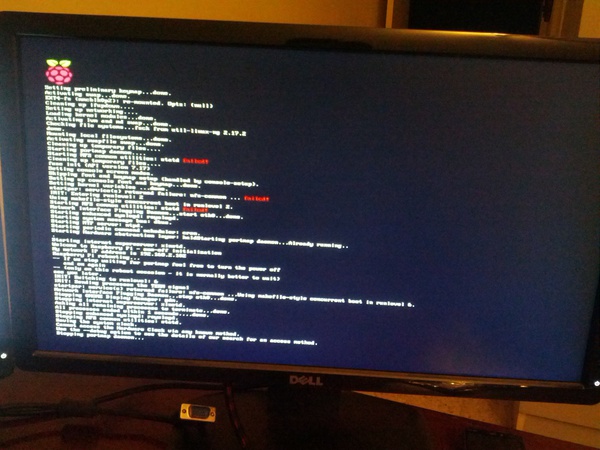

I was really excited to work on it, but when I got home and started to set it up I found that my SD card reader/writer was no longer functioning 🙁 After a few hours of trying, and of turning the house upside-down looking for the other card reader that I know I have but just couldn’t find, I finally gave up and messaged Krishna at 12:30am asking him to bring an SD card reader with him to the office the next day (which he did, thanks!). I had to wait a day, and once I got home with the reader I downloaded the Debian image to my computer and wrote it to the card, powered the Pi with my old BlackBerry charger, and plugged in an Ethernet cable and an HDMI cable (actually an HDMI to DVI cable, if you want to be picky) connecting the Pi to my second monitor. That’s when I hit a snag: it turns out that I don’t have a single USB keyboard at home; all my keyboards are PS/2 🙁 So now I either need to borrow a USB keyboard or go buy a small one. In any case, I powered the Pi up to see if it works OK, and it powered up fine.
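For anyone curious what "wrote it to the card" boils down to, here is a minimal Python sketch of a raw, chunk-by-chunk copy of an image file onto a target. It is only an illustration of the idea, not necessarily the tool used for this Pi. The function name `write_image`, the file name `debian.img` and the device path `/dev/sdX` are all hypothetical placeholders, and writing to a real device needs admin rights and will wipe whatever is on it.

```python
import os


def write_image(image_path, target_path, chunk_size=4 * 1024 * 1024):
    """Copy image_path onto target_path in fixed-size chunks.

    Returns the number of bytes written. target_path can be a regular
    file or (assumption) a raw SD card device node.
    """
    written = 0
    with open(image_path, "rb") as src, open(target_path, "wb") as dst:
        while True:
            chunk = src.read(chunk_size)
            if not chunk:  # end of the image file
                break
            dst.write(chunk)
            written += len(chunk)
        # Make sure everything has actually reached the card before
        # it gets pulled out of the reader.
        dst.flush()
        os.fsync(dst.fileno())
    return written


# Hypothetical usage (placeholder names, double-check the device first!):
# write_image("debian.img", "/dev/sdX")
```

The `fsync` at the end is the important bit: without it the copy can look finished while data is still sitting in the OS cache.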

The first boot took about a minute, but after that the system gets to the login prompt in about 15 seconds, which is pretty cool. I can reduce the boot time further by disabling services that I know I won’t use (like NFS). Unfortunately SSH wasn’t enabled on the box, so with no keyboard and no remote connection I couldn’t really do anything more at this time, but I am full of ideas for this device.
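A quick way to tell from another machine whether SSH is reachable on a box like this is to try opening a TCP connection to port 22. The sketch below does just that in Python. The helper name `ssh_port_open` and the address in the usage comment are made up for illustration, and a successful connection only shows that something is listening on the port, not that a login will work.

```python
import socket


def ssh_port_open(host, port=22, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection raises OSError (refused, unreachable, timed out)
        # when nothing answers on that port.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# Hypothetical usage (the address is a placeholder for the Pi's IP):
# print(ssh_port_open("192.168.1.50"))
```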

Below are some pics of the Pi in action:

Comparison shot of the RaspberryPi next to a Nokia N95

The RaspberryPi hooked up and ready for action

Initial Boot Sequence of RaspberryPi

I wanted to take a comparison shot of the Pi next to my Galaxy Nexus, but I was using the Nexus to take the photos (I didn’t feel like pulling out the camera, taking a pic, taking out the card and then uploading the pics, compared to just taking the photo and FTPing it to the computer).

Well, this is all for now. I am a bit sad, but still excited. Keep an eye here for more on the Pi and my experiments with it.

– Suramya