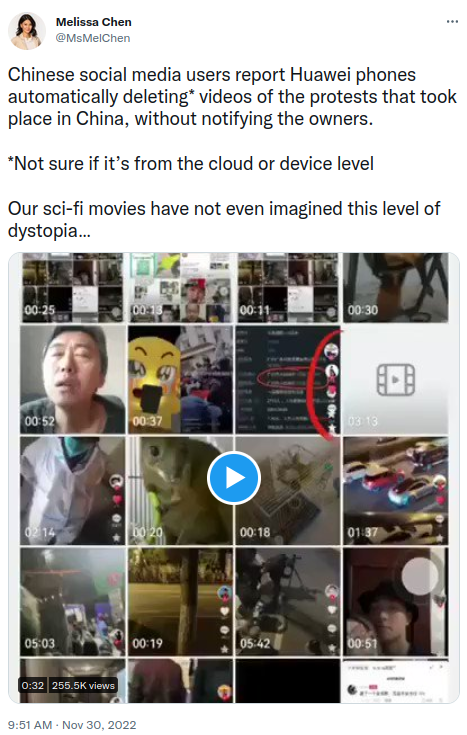

An interesting piece of news has been slowly spreading over the internet in the past few hours: Melissa Chen is claiming on Twitter that Huawei phones are automatically deleting videos of the protests that took place in China, without notifying their owners. Interestingly, I was not able to find any other source reporting this issue. Every reference or report links back to this single tweet, which is not supported by any external validation. The tweet itself does not provide enough information to validate the claim either, beyond a single video shared as part of the original tweet.

Melissa Chen claiming on Twitter that videos of protests are being automatically deleted by Huawei without notification

However, it is an interesting exercise to think about how this could have been accomplished, what the technical requirements would look like, and whether this is something that would actually happen. So let's go ahead and dig in. In order to delete a video remotely, we would need the following:

- The capability to identify the videos that need to be deleted without impacting other videos/photos on the device

- The capability to issue commands to the device remotely that all sensitive videos from xyz location taken at abc time need to be nuked, and to monitor the success/failure of the commands

- The capability to identify the devices that need to have the data on them looked at, keeping in mind that the device could have been in airplane mode during the filming

Now, let's look at how each of these could be accomplished, one at a time.

The capability to identify the videos that need to be deleted without impacting other videos/photos on the device

There are a few ways that we can identify the videos/photos to be deleted. If it was a video from a single source, we could use a hash value of the file to identify it and then delete it. Unfortunately, in this case the videos in question were recorded by the devices themselves, so each video file will have a different hash value and this approach would not work.
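To make the hash problem concrete, here is a minimal sketch in Python. The byte strings are made-up stand-ins for file contents, and the `blocklist` set and `is_blocklisted` helper are hypothetical names used only for illustration. It shows that a hash blocklist catches exact copies of a known file but not independent recordings of the same event.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Made-up stand-ins for file contents (assumption: real files would be
# read from disk; short byte strings keep the example self-contained).
shared_clip = b"one clip that was forwarded to many people"
copy_of_shared_clip = bytes(shared_clip)      # exact byte-for-byte copy
recording_a = b"frames captured by phone A"   # recorded independently
recording_b = b"frames captured by phone B"   # recorded independently

# A hypothetical blocklist built from the one known file.
blocklist = {sha256_hex(shared_clip)}


def is_blocklisted(data: bytes) -> bool:
    """True only if the content hashes to a value already on the blocklist."""
    return sha256_hex(data) in blocklist


print(is_blocklisted(copy_of_shared_clip))  # exact copy of the known clip
print(is_blocklisted(recording_a))          # independent recording
print(is_blocklisted(recording_b))          # independent recording
```

Only the exact copy matches. Every phone that filmed the event itself produces different bytes, so there is no single hash to look for.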

The second option is to use the metadata in the file to identify the date and time along with the physical location of the video to be deleted. If videos were recorded within a geo-fenced area in a specific timeframe, then we potentially have the information required to identify the videos in question. The main problem is that the user could have disabled geo-tagging of photos/videos taken by the phone, or the date/time stamp might be incorrect.
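A geo-fence plus time-window check could look roughly like the sketch below. Everything in it is an assumption made for illustration: the `VideoMeta` record, the `matches` function, the coordinates and the times are invented, and nothing here reflects how any real phone stores or reads its metadata. Distance is computed with the standard haversine formula.

```python
import math
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class VideoMeta:
    """Hypothetical metadata record; lat/lon are None if geo-tagging was off."""
    recorded_at: datetime
    lat: Optional[float] = None
    lon: Optional[float] = None


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points in kilometres."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def matches(meta: VideoMeta, centre_lat: float, centre_lon: float,
            radius_km: float, start: datetime, end: datetime) -> bool:
    """True if the video was recorded inside the fence during the time window."""
    if meta.lat is None or meta.lon is None:
        return False  # no location in the metadata, so nothing to match on
    in_window = start <= meta.recorded_at <= end
    in_fence = haversine_km(meta.lat, meta.lon, centre_lat, centre_lon) <= radius_km
    return in_window and in_fence


# Made-up fence and window, purely for illustration.
start = datetime(2022, 1, 1, 18, 0)
end = datetime(2022, 1, 1, 23, 0)
inside = VideoMeta(datetime(2022, 1, 1, 20, 0), lat=0.001, lon=0.001)
untagged = VideoMeta(datetime(2022, 1, 1, 20, 0))
wrong_clock = VideoMeta(datetime(2019, 6, 1, 12, 0), lat=0.001, lon=0.001)

for video in (inside, untagged, wrong_clock):
    print(matches(video, 0.0, 0.0, 1.0, start, end))
```

The second and third videos show the weakness described above: a video without location data, or with a wrong timestamp, slips straight through a filter like this.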

One way to get around users disabling geo-tagging would be to have the app/phone create a separate geo-location record of every photo/video taken by the device, even when GPS or geo-tagging is disabled. This would require a lot of changes in the OS/app, and since a lot of people have been looking at the code in Huawei phones for issues ever since the accusation that they are being used by China to spy on the Western world, it is hard to imagine this would have escaped scrutiny.

If the app was saving the data in the video/photo itself rather than in a separate location, then it should be easy enough to validate by examining the image/video data of photos/videos taken by any Huawei phone. But I don’t see any claims/reports that prove that this is happening.

The capability to issue commands to the device remotely that all sensitive videos from xyz location taken at abc time need to be nuked, and to monitor the success/failure of the commands

Coming to the second requirement, Huawei or the government would need the capability to remotely activate the functionality to delete the videos. In order to do this, the phone would need to connect to a Command & Control (C&C) channel frequently to check for commands, or it would need to have something listening for remote commands from a central server.

Both of these are hard to disguise and hide. Yes, there are ways to hide data in DNS queries and other such methods to cover the tracks, but thanks to botnets, malware and ransomware campaigns, the ability to identify hidden C&C channels is highly developed, and it is hard to hide from everyone looking at this. If the phone has something listening for commands, then a scan of the device for open ports/apps listening for connections would be an easy thing to check, and even if the listening app is disguised, it should be possible to identify that something is listening.
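As a rough illustration of the "scan for open ports" check, here is a minimal TCP connect scan in Python. It is a sketch only: the `open_tcp_ports` helper is my own name, the address in the usage note is a placeholder, and a real investigation would use proper tooling and also inspect outbound traffic, since a phone that polls a server never needs to listen on a port at all.

```python
import socket
from typing import Iterable, List


def open_tcp_ports(host: str, ports: Iterable[int], timeout: float = 0.5) -> List[int]:
    """Return the ports on `host` that accept a TCP connection."""
    found = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising an exception
            if sock.connect_ex((host, port)) == 0:
                found.append(port)
    return found
```

For example, `open_tcp_ports("192.0.2.10", range(1, 1025))` would check the well-known ports on a device at that (documentation-only) address from another machine on the same network. Only scan devices you own.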

You might say that the commands to activate might be hidden in the normal traffic going to and from the Huawei servers. While that is possible, we can check for it by installing a root certificate on the device and passing all of its traffic through a proxy to be analyzed. So hiding commands this way is not impossible, but it is hard to achieve without leaving signs, and considering the scrutiny these phones are going through, it is hard to accept that this is happening without anyone finding out about it.

The capability to identify the devices that need to have the data on them looked at (keeping in mind that the device could have been in airplane mode during the filming)

Next, we have the question of how Huawei would identify the devices that need to run the check for videos. One option would be to issue the command to all their phones anywhere in the world. This would potentially be noisy, and there is a possibility that a sharp-eyed user catches the command in action. So a far more likely option would be for them to issue it against a subset of their phones. This subset could be all phones in China, all phones that visited the location in question around the time the protest happened, or all phones that are in or around the location at present.

In order for the system to be able to identify users in an area, they have a few options. One would be to use GPS location tracking, which would require the device to constantly track its location and share it with a central location. Most phones already do this. One potential problem would be users disabling GPS on the device, but other than that this would be an easy request to fulfill. Another option is to use cell tower triangulation to locate/identify the phones in the area at a given time. This is something that is easily done on the provider side and, from what I have read, is quite common in China. Naomi Wu AKA RealSexyCyborg had a really interesting thread on this a little while ago that you should check out.

This doesn’t even account for the fact that China has CCTV coverage across most of its jurisdiction and claims to have the ability to run facial recognition across this massive amount of collected video. So, it is quite easy for the government to identify the phones that need to be checked for sensitive photos/videos with existing and known technology and ability.

Conclusion/Final thoughts

Now also remember that if Huawei had the ability to issue commands to its phones remotely, then they would also have the ability to extract data from the phones, or plant information on them. That would be an espionage gold mine, as people use their phones for everything and have them with them always. Losing the ability to do this just to delete videos is not something that I feel China/Huawei would do, as the harm caused by the loss of intelligence data would far outweigh the benefits of deleting the videos. Do you really think that every security agency, hacker collective, bored programmer, and antivirus/cybersec firm would not immediately start digging into the firmware/apps on any Huawei phone once it was known and confirmed that they are actively deleting stuff remotely?

So, while it is possible that Huawei/China has the ability to scan and delete files remotely, I doubt that this is the case right now, considering that there are almost no reports of this happening anywhere and no independent verification of the same. Plus, it doesn’t make sense for China to nuke this capability for such a minor return.

Keeping that in mind, this post seems more like a joke or fake news to me. That being said, I might be completely mistaken about all this, so if you have additional data or counterpoints to my reasoning above, I would love for you to reach out and discuss this in more detail.

– Suramya