I am not the most fervent fan of emojis in the world and for the most part I have gone through the last 20+ years with about 3-4 emojis that I use on a regular basis. But I know people who communicate wholly using them and while I am ok with folks using them in personal communications I dislike them immensely in professional communications (except for the occasional smiley face). However not everyone agrees with me and there have been books published in the past using just Emoji’s and now there is a programming language that is written entirely in emoji.

Emojicode, which first appeared as a Github project back in 2016 has been around for a while now and has a fairly strong following in the tech world. I realize that I sound like one of the old men screaming ‘Get off my lawn’ but I really don’t understand why anyone would want to code in a language that uses emoji’s to define text as a serious programming language. As a joke it would be fun to learn but I can’t really imagine coming in to work one day and writing code using Emojocode for a work project.

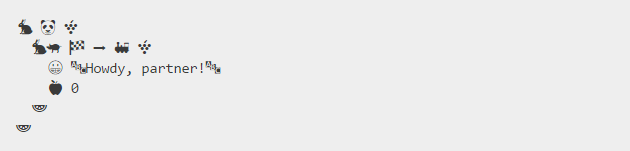

Here’s an example of the “Hello World” program written using Emojicode.

Hello World using Emojicode

If you want to learn coding in Emojicode, you can check out their impressive documentation or do a Code Academy course on the language. Emojicode is open-source, so you can also contribute to the development via their GitHub repository.

Yes, learning new programming languages is cool, but I don’t think I will be spending the effort to learn Emojicode anytime in the near future.

– Suramya